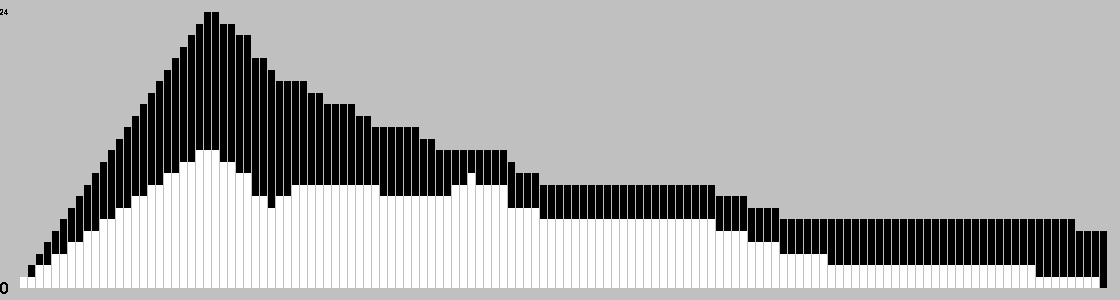

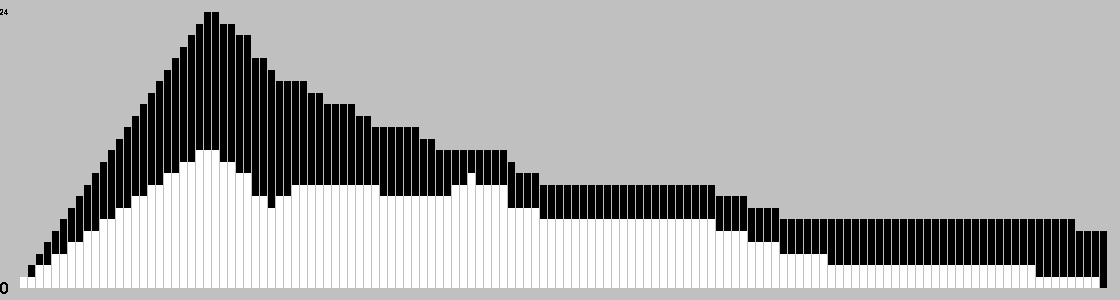

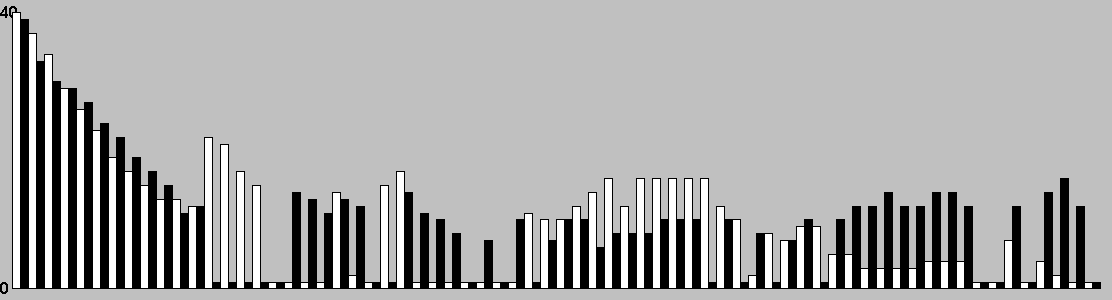

Change in Material Per Turn

This chart is based on a single playout, and gives a feel for the change in material over the course of a game.

Capture is obligatory during both the entering and movement phases. To capture, jump an orthogonally adjacent enemy piece, landing on the next space in line (which must be empty). After a capture, you must continue capturing if possible, but you may not make a 180-degree turn (no immediate backtracking). If there is a choice of captures, you must make the longest sequence of jumps possible.
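The maximal-capture rule can be sketched as a depth-first search over jump sequences. This is a minimal illustration, not the Ai Ai code. It assumes plain (col, row) grid coordinates rather than the a-i/1-9 notation used in the move lists, ignores the unusual board layout, stores stacks bottom to top as lists of colours, and assumes Laska-style capture: the jumping stack takes the top piece of the jumped stack and keeps it underneath, while the rest of the jumped stack stays put. That last assumption is why the no-180-degree rule matters, since otherwise a stack could bounce back and forth over the same tower. `longest_captures` is a hypothetical helper name.

```python
from typing import Dict, List, Optional, Tuple

Pos = Tuple[int, int]                 # (col, row) on an assumed plain grid
Board = Dict[Pos, List[str]]          # stack contents, bottom -> top; missing key = empty square
DIRS = [(1, 0), (-1, 0), (0, 1), (0, -1)]


def longest_captures(board: Board, start: Pos, size: int = 9) -> List[List[Pos]]:
    """Return every maximal-length capture path for the stack on `start`.

    The board is modified during the search but restored before returning.
    """
    me = board[start][-1]             # colour on top of the capturing stack
    best: List[List[Pos]] = []

    def search(pos: Pos, path: List[Pos], last_dir: Optional[Pos]) -> None:
        nonlocal best
        extended = False
        for d in DIRS:
            if last_dir is not None and d == (-last_dir[0], -last_dir[1]):
                continue              # no immediate 180-degree turn
            over = (pos[0] + d[0], pos[1] + d[1])
            land = (pos[0] + 2 * d[0], pos[1] + 2 * d[1])
            if not (0 <= land[0] < size and 0 <= land[1] < size):
                continue
            if over not in board or board[over][-1] == me or land in board:
                continue              # need an enemy on top and an empty landing square
            extended = True
            captured = board[over].pop()          # take the jumped stack's top piece
            if not board[over]:
                del board[over]
            stack = board.pop(pos)
            stack.insert(0, captured)             # assumed: prisoner goes underneath
            board[land] = stack
            search(land, path + [land], d)
            stack = board.pop(land)               # undo the jump
            stack.pop(0)
            board[pos] = stack
            board.setdefault(over, []).append(captured)
        if not extended and len(path) > 1:
            if not best or len(path) > len(best[0]):
                best = [path]
            elif len(path) == len(best[0]):
                best.append(path)

    search(start, [start], None)
    return best
```

To apply the rule in full, a caller would run this for every stack the player controls and keep only the globally longest paths.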

If no capture is possible, you may add a single piece to a vacant space, subject to the following restrictions:

If you have no pieces left off the board and no capture is available, you may move a piece one space. All movement is orthogonal.

If a player has no remaining pieces, they lose.

If a player has no legal moves on their turn, the game is a draw.
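The order in which these rules apply can be summarised as a small decision function. It is a hypothetical sketch: the caller is assumed to have already worked out whether a capture or a one-space step is available, and the entry restrictions mentioned above are not modelled.

```python
def turn_action(pieces_on_board, pieces_off_board, can_capture, can_step):
    """Classify what the player to move must do, following the rule priority above."""
    if pieces_on_board + pieces_off_board == 0:
        return "loss"        # no remaining pieces
    if can_capture:
        return "capture"     # capture is obligatory in both phases
    if pieces_off_board > 0:
        return "enter"       # add one piece to a vacant space (restrictions not modelled)
    if can_step:
        return "move"        # one orthogonal step
    return "draw"            # no legal move at all
```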

At the heart of this implementation are an ownership bitboard, used for efficient move calculation, and a separate array holding the contents of each stack. Hashing, maximal captures, and the unusual board layout were all problematic, but everything worked out well in the end. I still haven't beaten the AI at 1s/move, so be prepared to set the time limit very low!
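A minimal sketch of the bitboard-plus-stack-array idea follows. It assumes a plain 9x9 grid indexed as `row * 9 + col` and players numbered 0 and 1; the real board layout is different, and the class and mask names are hypothetical. Each player's bitboard marks the squares whose top piece they own, so candidate single jumps in all four directions can be found with a few shifts and edge masks, while the per-square lists keep the full stack contents.

```python
SIZE = 9                              # assumed 9x9 square indexing
FULL = (1 << (SIZE * SIZE)) - 1       # all 81 squares


def make_mask(pred):
    """Bitboard of squares (col, row) satisfying pred; square index = row * SIZE + col."""
    m = 0
    for row in range(SIZE):
        for col in range(SIZE):
            if pred(col, row):
                m |= 1 << (row * SIZE + col)
    return m


# Squares from which a two-square jump in each direction stays on the board (no row wrap).
EAST_OK = make_mask(lambda c, r: c <= SIZE - 3)
WEST_OK = make_mask(lambda c, r: c >= 2)
NORTH_OK = make_mask(lambda c, r: r <= SIZE - 3)
SOUTH_OK = make_mask(lambda c, r: r >= 2)


class Position:
    def __init__(self):
        self.owner = [0, 0]                             # per player: bit set if they own the top piece
        self.stacks = [[] for _ in range(SIZE * SIZE)]  # per square: owners of pieces, bottom -> top

    def _sync(self, sq):
        """Recompute the ownership bits for one square from its stack."""
        bit = 1 << sq
        self.owner[0] &= ~bit
        self.owner[1] &= ~bit
        if self.stacks[sq]:
            self.owner[self.stacks[sq][-1]] |= bit

    def push(self, sq, player):
        self.stacks[sq].append(player)
        self._sync(sq)

    def pop(self, sq):
        piece = self.stacks[sq].pop()
        self._sync(sq)
        return piece

    def empty(self):
        return FULL & ~(self.owner[0] | self.owner[1])

    def jump_sources(self, player):
        """Bitboard of the player's stacks that have at least one single jump available."""
        own, enemy, empty = self.owner[player], self.owner[1 - player], self.empty()
        return ((own & (enemy >> 1) & (empty >> 2) & EAST_OK)
                | (own & (enemy << 1) & (empty << 2) & WEST_OK)
                | (own & (enemy >> SIZE) & (empty >> 2 * SIZE) & NORTH_OK)
                | (own & (enemy << SIZE) & (empty << 2 * SIZE) & SOUTH_OK))
```

The edge masks are what stop a shift from treating the last column of one row as adjacent to the first column of the next.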

General comments:

Play: Combinatorial

Family: Draughts/Checkers games, Traditional

Mechanism(s): Capture, Movement

Components: Board

Level: Standard

| BGG Entry | Emergo |
|---|---|
| BGG Rating | 7.18462 |
| #Voters | 26 |
| SD | 1.60113 |
| BGG Weight | 4 |
| #Voters | 5 |
| Year | 1986 |

| User | Rating | Comment |
|---|---|---|
| Colonnello Vincent | 7 | |
| unic | 5 | |
| gmcnish | N/A | Freeling's favourite design 9x9, 2x12 stackable |
| ProgressorM | 6.7 | |
| mrraow | 9 | A big game on a small board (referring especially to the 7x7 square board version here). Truly a brain burner, with forced sequences at every turn. |
| CarlosLuna | 9 | |
| GornTC | 8 | I should make a board for this. I like this one. |
| AbstractStrategy | 8 | Amazing game!!! I'm not sure how a human brain can possibly strategise to win it but it's a lot of fun nonetheless. Reminds me of Bashni which I also love although I found that slightly easier to strategise. I should note that this rating is for the hex board version as I have not played it with the standard geometry. |
| kataclysm | N/A | The setup is just like Zertz. Need to find detailed rules and try out. |
| fogus | 6.5 | |
| Tony van der Valk | 10 | Just play this game on a normal 8 x 8 chessboard; its fine. Although the 9 x 9 board is on my wishlist. |
| CDRodeffer | 8 | Excellent abstract, and one of the best tower games ever devised. Highly recommended. The square board version is much better than the hexagonal one. |
| wiseguy | N/A | Gameboard originally printed in GAMES Magazine issue from Feb. 1986. |
| mocko | 8 | Just played this for the first time, against The Man Himself. After an initial feeling that I would never get the hang, I began to relax and admire. |
| megamau | 6.8 | The good thing: fascinating abstract. The bad thing: too many forced moves. It is very difficult to follow the tactics, let alone the strategy. And I only played by e-mail.....I assume over the board the problem would be worse. |
| dooz | 6 | |
| Nap16 | 4.5 | Free Print & Play version of an abstract game played on a 9x9 grid/board. |
| molnar | N/A | Freeling's pretty good; I'd like to try this. |
| mafko | 7.5 | |
| Wilco | 8 | |
| StewartTame | 6 | Decent abstract. If I play again, I need to remember that jng stacks with two or more of my opponent's pieces on top is NOT a good idea. |
| camisdad | N/A | make my own prtoject under way |
| Zickzack | 7.5 | My gut feeling so far is that Emergo is better than Laska, but not as good as Bashni. Recommended order of approach: [GameID=6862], [GameID=36550], then Emergo. The game is difficult to rate. The opening phase is opaque. At the beginning, I thought it was a bug. Now, I think it is a feature. Comparisons with games like [GameID=528] and Laska indicate that Emergo got it right, whereas those games got it wrong. Laska and ZÈRTZ can be attacked with brute force, and that is definitely not a feature. Freeling invented a simple opening protocol that prevents this and results in a completely new game. There are surprisingly few games where the board is filled first and emptied later. Most games work by either filling (e.g. Go) or emptying (e.g. Checkers). The archaic Morris games form an exception, and Emergo now, too. In my current opinion, the opening phase should be seen as the core game. The second phase is usually determined by the former, especially in correspondence play. |
| PnP Game Selector | N/A | Described in this Geeklist http://www.boardgamegeek.com/geeklist/26882/item/545897#item545897 |
| orangeblood | 8 | Really an amazing and deep game. With the placement and movement phases, it feels like two games in one. Initial rating of 8. ------- I'm using a 10x10 draughts board where I marked off the appropriate 9x9 area with a Sharpie. Until I get the proper checkers, GIPF pieces work well. |
| bellaatriks | 6 | |
| dralius | 8 | |
| schwarzspecht | 7 | |
| kc2dpt | 7 | |
| mjf71 | N/A | 2 45m |
| Windu47 | N/A | 1 |
| ed_in_play | N/A | printed board to use with focus or domination pieces |
| El Diabolo | 8.5 | This game is a lesson in the "law of unintended consequences." Very simple rule set leads to wildly subtle and sophisticated strategy. |
| FiveStars | 2 | After the opening Emergo is like a Lasca endgame played on a slightly larger board. I call it "castrated Lasca". |
| The Player of Games | 8.5 | Very interesting game! Not quite like any I have played before as I have not played any of the stacking Checkers variants before this. At best, this reminds me of a hybrid of Checkers and Tak. There is plenty of depth here. Need to play more. Preliminary rating. May go up with more plays. |

| AI | Strong Wins | Draws | Strong Losses | #Games | Strong Win% | p1 Win% | Game Length |
|---|---|---|---|---|---|---|---|
| Random | | | | | | | |
| Rαβ + ocqBKs (t=0.01s) | 35 | 2 | 0 | 37 | 97.30 | 45.95 | 73.08 |
| Rαβ + ocqBKs (t=0.07s) | 31 | 10 | 5 | 46 | 78.26 | 58.70 | 134.61 |
| Rαβ + ocqBKs (t=0.55s) | 32 | 8 | 6 | 46 | 78.26 | 60.87 | 118.61 |

Level of Play: Strong beats Weak 60% of the time (lower bound with 90% confidence).

Draw%, p1 win% and game length may indicate trends as AI strength increases, but be aware that the AI can introduce bias due to horizon effects, poor heuristics, etc.
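The Strong Win% column is consistent with counting each draw as half a win: for the t=0.55s row, (32 + 8/2) / 46 = 78.26%, and the other two rows check out the same way. The sketch below reproduces that figure and adds a one-sided Wilson score lower bound as one common way to attach a confidence level to such a rate. The page does not say which method produced the 60% figure, so this bound is an illustration only and need not match it; the function names are hypothetical.

```python
from math import sqrt


def strong_win_rate(wins, draws, losses):
    """Score for the stronger AI, counting each draw as half a win."""
    return (wins + 0.5 * draws) / (wins + draws + losses)


def wilson_lower_bound(p, n, z=1.2816):
    """One-sided Wilson score lower bound; z = 1.2816 corresponds to about 90% confidence."""
    z2 = z * z
    centre = p + z2 / (2 * n)
    margin = z * sqrt(p * (1 - p) / n + z2 / (4 * n * n))
    return (centre - margin) / (1 + z2 / n)


p = strong_win_rate(32, 8, 6)   # the t=0.55s row
print(f"{100 * p:.2f}%", f"{100 * wilson_lower_bound(p, 46):.1f}%")
```

Treating draws as half-successes in a binomial interval is itself an approximation.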

| Size (bytes) | 37964 |
|---|---|
| Reference Size | 10293 |
| Ratio | 3.69 |

Ai Ai calculates the size of the implementation and compares it to the Ai Ai implementation of the simplest possible game (which just fills the board). Note that this estimate may include some graphics and heuristics code as well as the game logic. See the Wikipedia entry for more details.
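For this game, the ratio in the table works out as:

$$
\text{Ratio} = \frac{\text{Size}}{\text{Reference Size}} = \frac{37964}{10293} \approx 3.69
$$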

| Playouts per second | 32068.45 (31.18µs/playout) |
|---|---|
| Reference (Connect 4) | 1964636.54 (0.51µs/playout) |
| Ratio (low is good) | 61.26 |

Tavener complexity: the heat generated by playing every possible instance of a game with a perfectly efficient programme. Since this is not possible to calculate, Ai Ai calculates the number of random playouts per second and compares it to the fastest non-trivial Ai Ai game (Connect 4). This ratio gives a practical indication of how complex the game is. Combine this with the computational state space, and you can get an idea of how strong the default (MCTS-based) AI will be.
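The figures in the playouts table are consistent with simple arithmetic: microseconds per playout is 10^6 divided by playouts per second, and the ratio divides the reference rate by this game's rate. Running the sketch below gives 31.18, 0.51 and 61.26, matching the table; the function names are illustrative only.

```python
def us_per_playout(playouts_per_second):
    """Average time per random playout, in microseconds."""
    return 1e6 / playouts_per_second


def speed_ratio(reference_pps, game_pps):
    """How many times slower this game's playouts are than the reference (low is good)."""
    return reference_pps / game_pps


emergo, connect4 = 32068.45, 1964636.54
print(round(us_per_playout(emergo), 2), round(us_per_playout(connect4), 2),
      round(speed_ratio(connect4, emergo), 2))
```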

| 1: White win % | 57.90±3.08 | Includes draws = 50% |
|---|---|---|
| 2: Black win % | 42.10±3.02 | Includes draws = 50% |
| Draw % | 24.00 | Percentage of games where all players draw. |
| Decisive % | 76.00 | Percentage of games with a single winner. |
| Samples | 1000 | Quantity of logged games played |
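Because each draw is credited as 50% to both sides, the outright win rates can be recovered by subtracting half the draw rate from each figure. With the numbers in the table, White wins about 45.90% and Black about 30.10% of games outright, which sums to the 76.00% decisive rate. A minimal sketch (the helper name is hypothetical):

```python
def decisive_split(p1_pct, p2_pct, draw_pct):
    """Recover outright win rates from 'win %' figures that count each draw as 50%."""
    half = draw_pct / 2
    return p1_pct - half, p2_pct - half


white, black = decisive_split(57.90, 42.10, 24.00)
print(round(white, 2), round(black, 2), round(white + black, 2))
```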

Note that win/loss statistics may vary depending on thinking time (horizon effect, etc.), bad heuristics, bugs, and other factors, so they should be taken with a pinch of salt. (Given perfect play, any game of pure skill will always end in the same result.)

Note: Ai Ai differentiates between states where all players draw or win or lose; this is mostly to support cooperative games.

| Label | Its/s | SD | Nodes/s | SD | Game length | SD |
|---|---|---|---|---|---|---|
| Random playout | 35,253 | 156 | 3,732,132 | 13,584 | 106 | 52 |
| search.UCB | 36,276 | 627 | 108 | 36 | | |
| search.UCT | 36,225 | 758 | 119 | 41 | | |
| search.Minimax | 1,997,172 | 49,021 | 154 | 55 | | |
| search.AlphaBeta | 373,247 | 58,061 | 138 | 47 | | |

Random: 10-second warm-up for the HotSpot compiler, then 100 trials of 1000 ms each.

Other: 100 playouts; means are calculated over the first 5 moves only, to avoid distortion from the speed-up at the end of the game.

Rotation (Half turn) lost each game as expected.

Reflection (X axis) lost each game as expected.

Reflection (Y axis) lost each game as expected.

Copy last move lost each game as expected.

Mirroring strategies attempt to copy the previous move. On the first move, they attempt to play in the centre. If neither of these is possible, they pick a random move. Each entry represents a different form of copying: direct copy, reflection in the X or Y axis, or half-turn rotation.
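The mirroring strategies can be sketched as square transforms plus a fallback rule. This assumes squares are named with files a-i and ranks 1-9, as in the move lists on this page, with e5 as the centre; a transformed square that is not playable simply will not appear in `legal`, so the strategy falls back to a random move. All names here are hypothetical, not Ai Ai's.

```python
import random

SIZE = 9
FILES = "abcdefghi"      # assumed: files a-i, ranks 1-9
CENTRE = "e5"


def parse(sq):
    return FILES.index(sq[0]), int(sq[1:]) - 1


def fmt(col, row):
    return f"{FILES[col]}{row + 1}"


def copy_sq(sq):
    return sq


def half_turn(sq):
    c, r = parse(sq)
    return fmt(SIZE - 1 - c, SIZE - 1 - r)


def reflect_x(sq):
    """Reflect in the X axis: the rank flips, the file stays."""
    c, r = parse(sq)
    return fmt(c, SIZE - 1 - r)


def reflect_y(sq):
    """Reflect in the Y axis: the file flips, the rank stays."""
    c, r = parse(sq)
    return fmt(SIZE - 1 - c, r)


def map_move(transform, move):
    """Apply a square transform to every square of a move such as 'f6xh8'."""
    return "x".join(transform(sq) for sq in move.split("x"))


def mirror_reply(prev, legal, transform, rng=random):
    """Copy the opponent's last move under `transform`; on the first move try the centre; else random."""
    target = CENTRE if prev is None else map_move(transform, prev)
    if target in legal:
        return target
    return rng.choice(legal)
```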

| Game length | 131.39 | |
|---|---|---|
| Branching factor | 11.01 | |
| Complexity | 10^104.79 | Based on game length and branching factor |
| Samples | 1000 | Quantity of logged games played |

Computational complexity (where present) is an estimate of the game tree reachable through actual play. For each game in turn, Ai Ai marks the positions reached in a hashtable, then counts the number of new entries added to the table. Once all moves are applied, it treats this sequence as a geometric progression and calculates its sum as n → ∞.
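The geometric extrapolation can be sketched as follows. The page does not say how the common ratio is estimated, so this version assumes a least-squares fit of log(count) against game index; `geometric_total` is a hypothetical name, and the input is the number of new positions each successive game added to the table.

```python
from math import exp, log


def geometric_total(new_counts):
    """Extrapolate the total of a shrinking sequence of per-game new-position counts.

    Fits log(count_k) = log(a) + k * log(r) by least squares, then returns the
    infinite geometric sum a / (1 - r). Counts must be positive.
    """
    n = len(new_counts)
    if n < 2:
        raise ValueError("need at least two games to estimate a ratio")
    ks = list(range(n))
    ys = [log(c) for c in new_counts]
    k_mean = sum(ks) / n
    y_mean = sum(ys) / n
    slope = (sum((k - k_mean) * (y - y_mean) for k, y in zip(ks, ys))
             / sum((k - k_mean) ** 2 for k in ks))
    r = exp(slope)                       # fitted common ratio
    a = exp(y_mean - slope * k_mean)     # fitted first term
    if r >= 1:
        raise ValueError("counts are not shrinking, so the series does not converge")
    return a / (1 - r)
```

For an exactly geometric input such as 1000, 500, 250, 125 the fit recovers a = 1000 and r = 0.5, giving a total of 2000.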

| Distinct actions | 955 | Number of distinct moves (e.g. "e4") regardless of position in game tree |
|---|---|---|
| Killer moves | 194 | A 'killer' move is selected by the AI more than 50% of the time. Too many killers to list. |
| Good moves | 465 | A good move is selected by the AI more often than average. |
| Bad moves | 179 | A bad move is selected by the AI less often than average. |
| Terrible moves | 102 | A terrible move is never selected by the AI. Too many terrible moves to list. |
| Samples | 1000 | Quantity of logged games played |


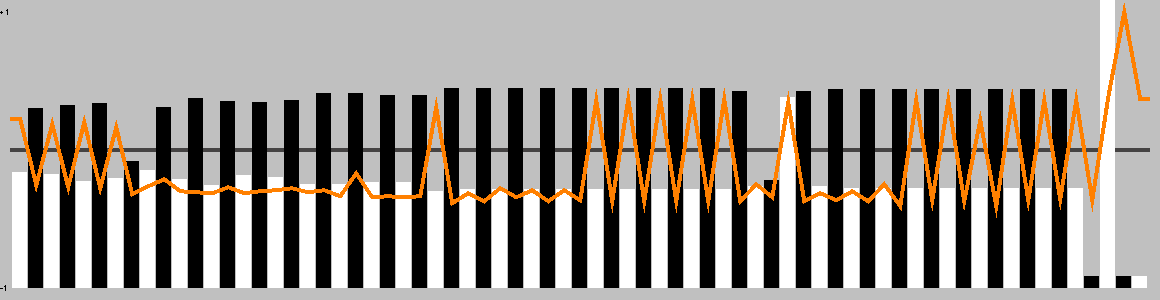

This chart shows the best move value with respect to the active player; the orange line represents the value of doing nothing (null move).

The lead changed on 8% of the game turns. Ai Ai found 6 critical turns (turns with only one good option).

Overall, this playout was 73.24% hot.

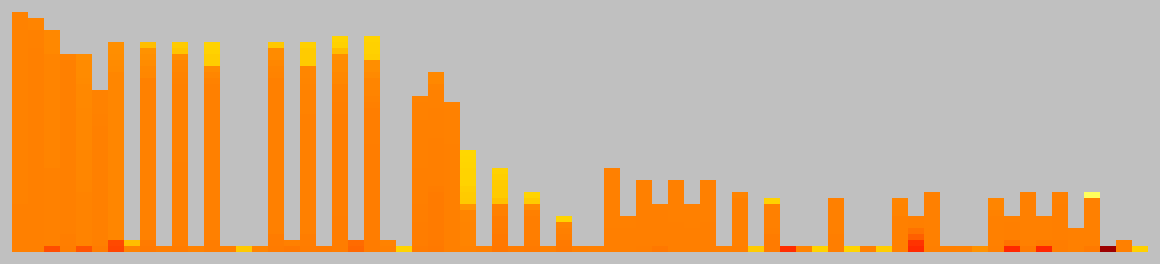

This chart shows the relative temperature of all moves each turn. Colour range: black (worst), red, orange (even), yellow, white (best).

| Measure | All players | Player 1 | Player 2 |
|---|---|---|---|
| Mean % of effective moves | 81.77 | 70.41 | 93.46 |
| Mean no. of effective moves | 7.63 | 7.00 | 8.29 |
| Effective game space | 10^36.55 | 10^16.75 | 10^19.80 |
| Mean % of good moves | 36.33 | 66.89 | 4.89 |
| Mean no. of good moves | 3.72 | 7.19 | 0.14 |
| Good move game space | 10^17.76 | 10^17.46 | 10^0.30 |

These figures were calculated over a single game.

An effective move is one whose score is within 0.1 of the best move's score (the best move itself included). Scores range from -1 (loss) to 1 (win).

A good move has a score > 0. Note that when there are no good moves, a multiplier of 1 is used for the game space calculation.
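A sketch of these definitions follows, reading "within 0.1 of the best move" as an absolute score difference (an assumption). Per-turn counts multiply across the game, so the game space is a sum of base-10 logs; this is consistent with the table, where the per-player effective spaces 10^16.75 and 10^19.80 combine to 10^36.55. Function names are hypothetical.

```python
from math import log10


def turn_counts(scores, margin=0.1):
    """Count effective moves (within `margin` of the best score) and good moves (score > 0)."""
    best = max(scores)
    effective = sum(1 for s in scores if s >= best - margin)
    good = sum(1 for s in scores if s > 0)
    return effective, good


def game_space_log10(counts_per_turn):
    """log10 of the product of per-turn counts; a turn with zero such moves contributes a multiplier of 1."""
    return sum(log10(c) for c in counts_per_turn if c > 0)
```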

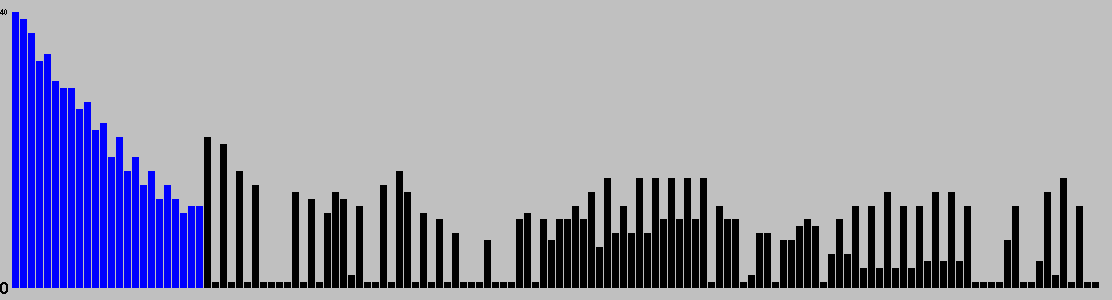

Table: branching factor per turn.

This chart is based on a single playout, and gives a feel for the types of moves available over the course of a game.

Red: removal, Black: move, Blue: Add, Grey: pass, Purple: swap sides, Brown: other.

| Depth | 0 | 1 | 2 | 3 | 4 | 5 |
|---|---|---|---|---|---|---|
| Count | 1 | 40 | 1544 | 30822 | 546265 | 6567575 |

Note: most games do not take board rotation and reflection into consideration.

Multi-part turns could be treated as the same or different depth depending on the implementation.

Counts to depth N include all moves reachable at lower depths.

Inaccuracies may also exist due to hash collisions, but Ai Ai uses 64-bit hashes so these will be a very small fraction of a percentage point.

No solutions found to depth 5.
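Cumulative counts like those in the depth table can be produced with a breadth-first search that records each position the first time it is reached. This is a generic sketch, not Ai Ai's code: it stores whole positions in a Python set rather than 64-bit hashes, and `successors` is a hypothetical callback. For a real game the position key must include the side to move.

```python
def count_positions(root, successors, max_depth):
    """Cumulative number of distinct positions reachable within 0..max_depth moves."""
    seen = {root}
    frontier = [root]
    counts = [1]
    for _ in range(max_depth):
        next_frontier = []
        for pos in frontier:
            for child in successors(pos):
                if child not in seen:        # first time reached: count it once
                    seen.add(child)
                    next_frontier.append(child)
        frontier = next_frontier
        counts.append(len(seen))
    return counts
```

As a toy check, with positions as integers and moves n → n + 1 or n → 2n from 1, the counts to depths 0 through 3 are 1, 2, 4 and 7.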

| Moves |
|---|
| f8,f6,c9,e9,g7,f6xh8,g7,h8xf6,g7,f6xh8 |
| b6,d6,a3,a5,c7,d6xb8,c7,b8xd6,c7,d6xb8 |
| f8,f6,c9,e9,g7,f6xh8,g7,h8xf6,g7 |
| b6,d6,a3,a5,c7,d6xb8,c7,b8xd6,c7 |
| f8,f6,c9,e9,g7,f6xh8,g7,h8xf6 |
| h2,f2,i5,i3,g3,f2xh4,i5xg3,i3xg1 |
| i5,f2,h2,i3,g3,f2xh4,i5xg3,i3xg1 |
| b6,d6,a3,a5,c7,d6xb8,c7,b8xd6 |
| f8,f6,c9,e9,g7,f6xh8,g7 |
| h2,f2,i5,i3,g3,f2xh4,i5xg3 |
| i5,f2,h2,i3,g3,f2xh4,i5xg3 |
| b6,d6,a3,a5,c7,d6xb8,c7 |
| f8,f6,c9,e9,g7,f6xh8 |
| h2,f2,i5,i3,g3,f2xh4 |
| i5,f2,h2,i3,g3,f2xh4 |
| b6,d6,a3,a5,c7,d6xb8 |
| f8,f6,c9,e9,g7 |
| h2,f2,i5,i3,g3 |
| i5,f2,h2,i3,g3 |
| b6,d6,a3,a5,c7 |
| f8,f6,c9,e9 |
| h2,f2,i5,i3 |
| i5,f2,h2,i3 |
| b6,d6,a3,a5 |
| g9,b4,d6 |
| f4,e9,e5 |
| d6,b6,f8 |

Colour shows the success ratio of this play over the first 10 moves: black < red < yellow < white.

Size shows the frequency this move is played.

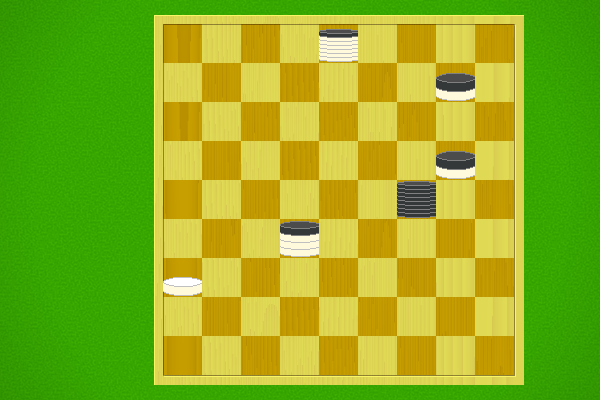

| Puzzle | Solution |
|---|---|
| Black to win in 3 moves | |

Selection criteria: the first move must be unique, and not forced to avoid losing. Beyond that, puzzles are rated by the product of [total moves]/[best moves] at each step, and the best puzzles are selected.
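The rating rule can be written directly as a product over the steps of the solution line. In this sketch each step is a (total moves, best moves) pair, and the `first_move_forced` flag is a hypothetical input standing in for the "not forced to avoid losing" check, which the page does not define further.

```python
from math import prod


def puzzle_rating(steps):
    """Product of total_moves / best_moves over each step of the solution line."""
    return prod(total / best for total, best in steps)


def eligible(steps, first_move_forced):
    """The first move must be unique and not merely forced to avoid losing."""
    return steps[0][1] == 1 and not first_move_forced
```

A step with many options but a single best move contributes a large factor, so higher ratings favour puzzles whose solutions are harder to stumble on.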