Playout/Search Speed

| Label | Its/s | SD | Nodes/s | SD | Game length | SD |
|---|---|---|---|---|---|---|
| Random playout | 4,356 | 380 | 336,948 | 29,289 | 77 | 6 |
| search.UCB | 4,425 | 294 | | | 69 | 6 |
| search.UCT | 4,495 | 297 | | | 68 | 5 |

| Measure | Value | Description |
|---|---|---|
| Game length | 68.45 | |
| Branching factor | 41.48 | |
| Complexity | 10^104.26 | Based on game length and branching factor |
| Samples | 1000 | Quantity of logged games played |
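As a rough cross-check of the complexity figure, the textbook game-tree estimate is b^d, with b the average branching factor and d the average game length. The sketch below evaluates it in log space using the two averages from the table. It is an assumption that this formula is comparable to what Ai Ai computes: the result is about 10^110.7, somewhat above the reported 10^104.26, so the report presumably aggregates differently (for example by averaging per-turn logarithms rather than taking the logarithm of the average). The function name is hypothetical.

```python
import math

def log10_game_tree_size(branching_factor: float, game_length: float) -> float:
    """Return log10(b ** d), computed in log space to avoid overflow."""
    return game_length * math.log10(branching_factor)

# Averages taken from the table above.
estimate = log10_game_tree_size(41.48, 68.45)
print(f"Naive estimate: 10^{estimate:.2f}")
```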

Move Classification

| Measure | Value | Description |
|---|---|---|
| Distinct actions | 125 | Number of distinct moves (e.g. "e4") regardless of position in game tree |
| Good moves | 51 | A good move is selected by the AI more than the average |
| Bad moves | 73 | A bad move is selected by the AI less than the average |
| Samples | 1000 | Quantity of logged games played |

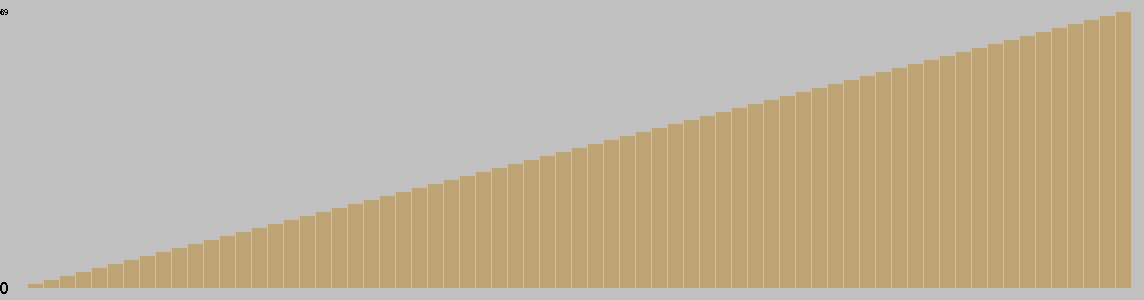
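The good/bad split described in the table can be sketched as a comparison of each distinct action's selection count against the mean count. This is a minimal illustration under stated assumptions: that "the average" is the mean number of selections per distinct action, and that the input is a flat list of move labels taken from the logged games. The function name and sample moves are hypothetical, not part of Ai Ai.

```python
from collections import Counter

def classify_moves(selected_moves):
    """Split distinct actions into those chosen more or less often than the mean."""
    counts = Counter(selected_moves)
    mean = sum(counts.values()) / len(counts)
    good = sorted(m for m, c in counts.items() if c > mean)
    bad = sorted(m for m, c in counts.items() if c < mean)
    return good, bad

# Hypothetical log: "e4" chosen 3 times, "d4" twice, "a3" once (mean is 2).
good, bad = classify_moves(["e4", "e4", "e4", "d4", "d4", "a3"])
print(good, bad)
```

Under this reading, an action selected exactly the average number of times falls into neither group, which would be consistent with 51 good and 73 bad moves adding up to 124 of the 125 distinct actions.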

Change in Material Per Turn

This chart is based on a single playout, and gives a feel for the change in material over the course of a game.

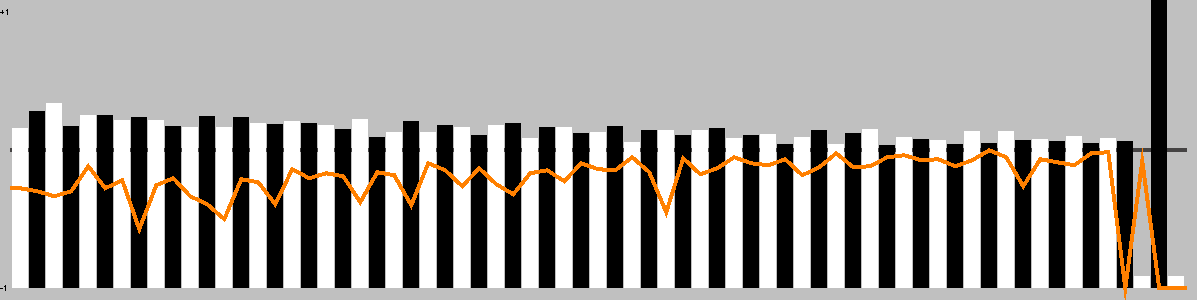

Trajectory

This chart shows the best move value with respect to the active player; the orange line represents the value of doing nothing (null move).

The lead changed on 65% of the game turns. Ai Ai found 10 critical turns (turns with only one good option).

Overall, this playout was 95.65% hot.

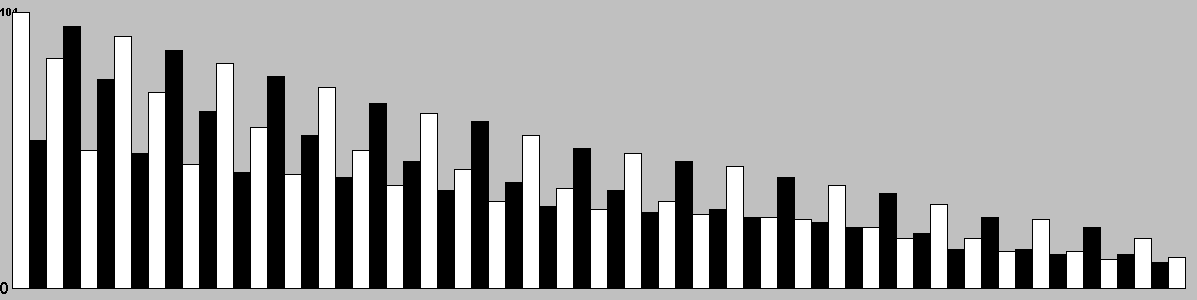

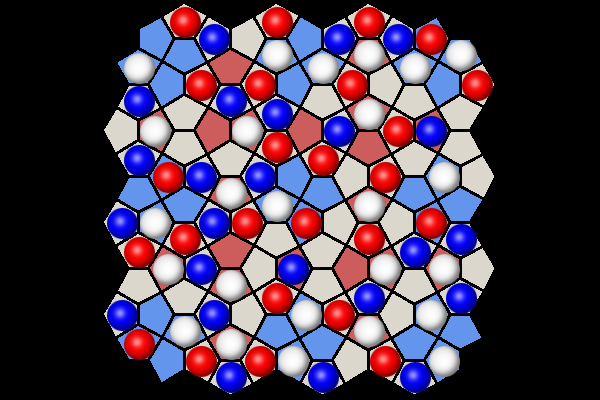

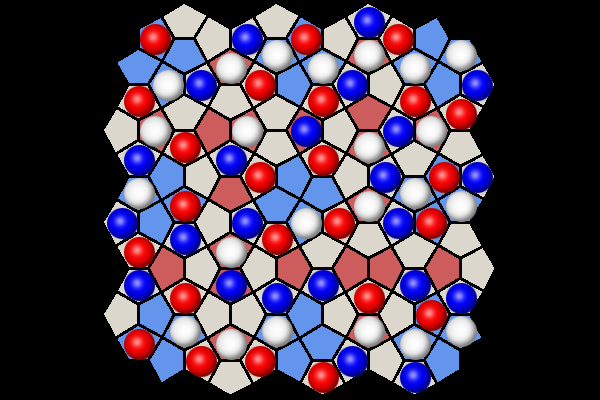

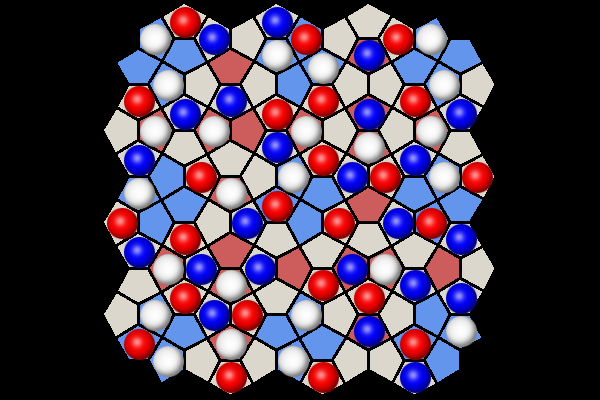

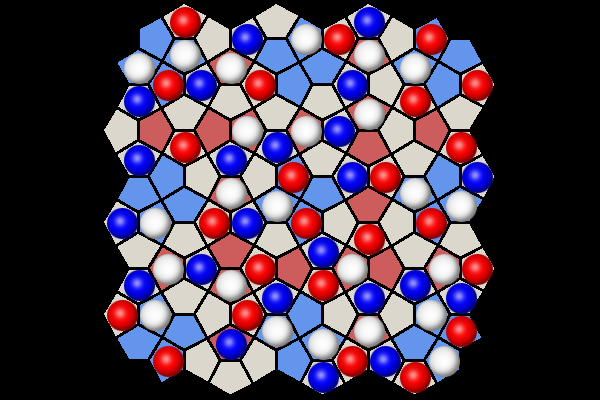

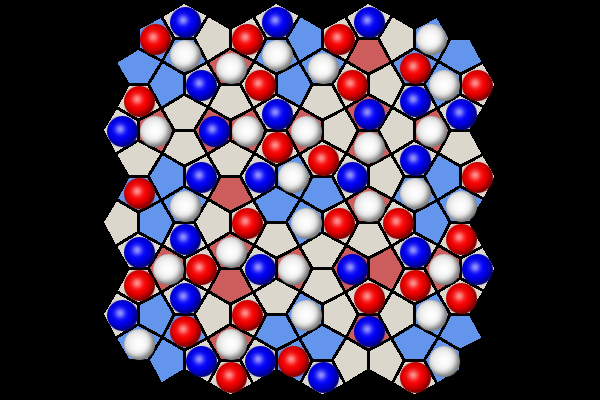

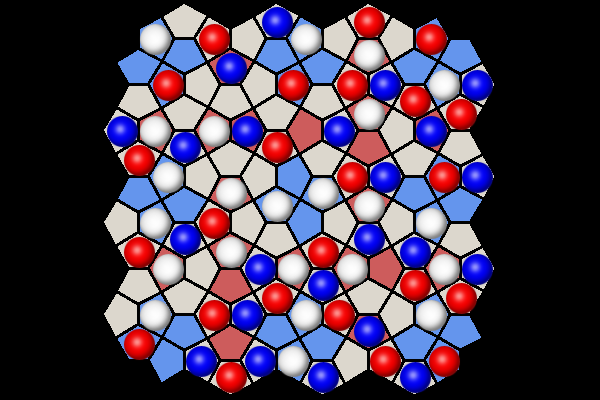

Position Heatmap

This chart shows the relative temperature of all moves each turn. Colour range: black (worst), red, orange (even), yellow, white (best).

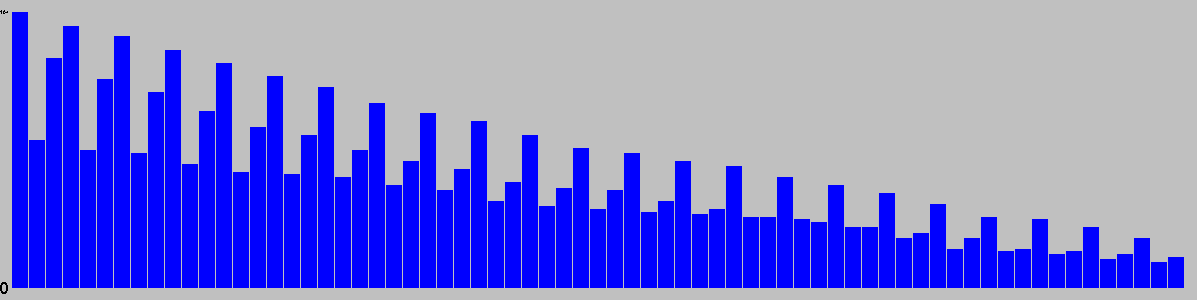

Actions/turn

Table: branching factor per turn.

Action Types per Turn

This chart is based on a single playout, and gives a feel for the types of moves available over the course of a game.

Red: removal, Black: move, Blue: add, Grey: pass, Purple: swap sides, Brown: other.

Unique Positions Reachable at Depth

| Depth | 0 | 1 | 2 | 3 |
|---|---|---|---|---|
| Positions | 1 | 104 | 5892 | 509348 |

Note: most games do not take board rotation and reflection into consideration.

Multi-part turns could be treated as the same or different depth depending on the implementation.

Counts to depth N are cumulative: they include all positions reachable at lower depths.

Inaccuracies may also exist due to hash collisions, but Ai Ai uses 64-bit hashes, so these will amount to a very small fraction of a percentage point.
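The cumulative counting described in these notes can be sketched as a breadth-first expansion that keeps every position seen so far. This is only an illustration, not Ai Ai's implementation: `successors` is a hypothetical callback returning the positions reachable in one move, positions are assumed to be hashable, and the sketch stores whole positions rather than 64-bit hashes, so it has no collisions and applies no rotation or reflection handling.

```python
def cumulative_unique_positions(start, successors, max_depth):
    """Return the number of unique positions reachable within 0..max_depth moves."""
    seen = {start}
    frontier = [start]
    totals = [1]  # depth 0: only the start position
    for _ in range(max_depth):
        next_frontier = []
        for position in frontier:
            for nxt in successors(position):
                if nxt not in seen:  # count each position once, at its shallowest depth
                    seen.add(nxt)
                    next_frontier.append(nxt)
        frontier = next_frontier
        totals.append(len(seen))  # cumulative, so lower depths are included
    return totals

# Toy game: a position is an integer and a move adds 1 or 2.
toy_counts = cumulative_unique_positions(0, lambda p: [p + 1, p + 2], 3)
print(toy_counts)
```

Swapping the `seen` set of positions for a set of 64-bit hashes trades memory for the small collision risk mentioned above.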

Shortest Game(s)

No solutions found to depth 3.

Openings

| Moves | Animation |
|---|---|
| f1E,g7N,c8W | |
| b5E,h4N,b2N | |
| b8S,h5W,f6N | |
| b2S,f6N | |
| c2W,e1N | |
| e2E,a7N | |
| a3S,e6W | |
| a3N,e6W | |
| b3E,f2S | |
| h3E,b4N | |
| b4S,g8E | |
| e5N,h1W | |

Puzzles

| Puzzle | Solution |
|---|---|
| White to win in 7 moves | |
| White to win in 3 moves | |
| Black to win in 5 moves | |
| Black to win in 3 moves | |
| White to win in 6 moves | |
| White to win in 7 moves | |

Selection criteria: the first move must be unique, and must not be forced to avoid losing. Beyond that, puzzles are rated by the product of [total moves]/[best moves] at each step, and the best-rated puzzles are selected.
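The rating rule can be sketched as a running product over the steps of a puzzle's solution. This is a minimal illustration under stated assumptions: each step is given as a pair (total legal moves, number of best moves), and the function name and sample figures are hypothetical rather than taken from Ai Ai.

```python
from math import prod

def puzzle_rating(steps):
    """Multiply total_moves / best_moves over every step of the solution."""
    return prod(total / best for total, best in steps)

# Hypothetical three-step puzzle: 40 legal moves with 1 best, 12 with 2 best, 5 with 1 best.
rating = puzzle_rating([(40, 1), (12, 2), (5, 1)])
print(rating)
```

A higher rating means the solver has more alternatives to reject at each step, so steps with many legal moves and a single best move contribute most.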