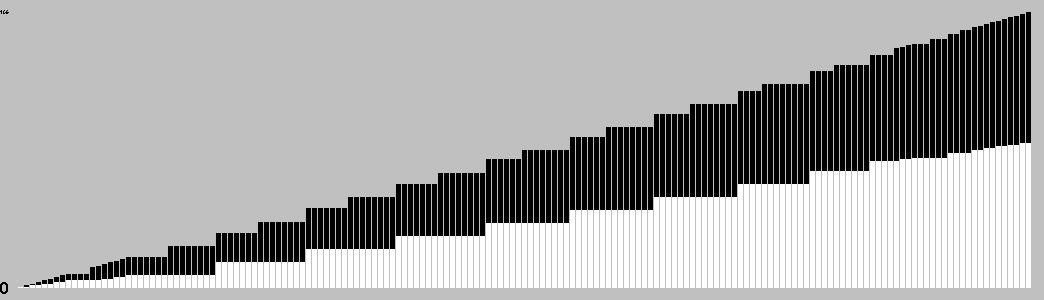

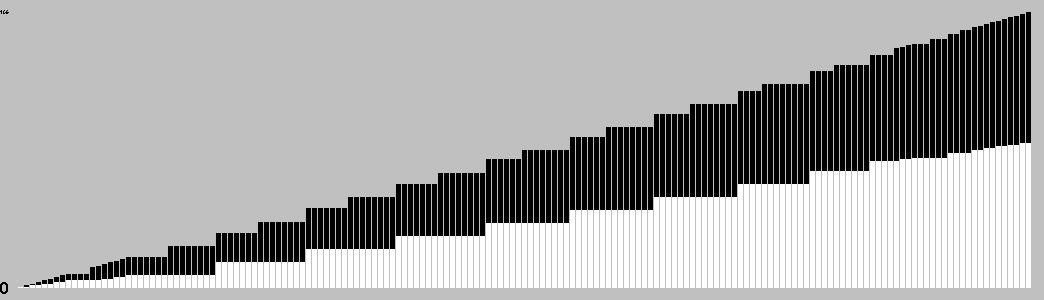

Change in Material Per Turn

This chart is based on a single playout, and gives a feel for the change in material over the course of a game.

Have the highest score at the end of the game.

Each turn, either:

- place a single stone to start a new group, or
- add one stone to each of your existing groups.

When the board is full, you score as follows:

{Stones placed} − k × {number of groups}

where k is a multiple of 4 chosen at the start of the game.
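The scoring formula above can be written as a one-line function. This is a minimal sketch: the function name `symple_score` and the example position (85 stones in 3 groups, k = 4) are made up for illustration.

```python
def symple_score(stones_placed: int, groups: int, k: int) -> int:
    """Score = stones placed, minus k points for every group."""
    return stones_placed - k * groups


# Hypothetical end position: 85 stones in 3 groups, with k = 4.
print(symple_score(85, 3, 4))  # 85 - 4 * 3 = 73
```

Each extra group costs k points, so joining two groups late in the game is worth as much as placing k extra stones.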

General comments:

Play: Combinatorial

Mechanism(s): Connection,Pattern

Components: Board

| BGG Entry | Symple |
|---|---|
| BGG Rating | 7.7 |
| #Voters | 6 |
| SD | 0.5 |
| BGG Weight | 0 |
| #Voters | 0 |
| Year | 2010 |

| User | Rating | Comment |
|---|---|---|
| luigi87 | 8.2 | |
| mrraow | 8 | Clever territory game; the branching factor is brutal, so don't expect a strong AI! |
| kevan | 7 | |
| milomilo122 | N/A | A beautiful idea that doesn't quite work as a game for me, because too many stones need to be placed on a single turn. When I have to place 8 stones on a turn in a deep game like this, it's a recipe for titanic analysis paralysis. |
| orangeblood | 8 | This is a very interesting design with mechanics that I've not seen before. You could call it a Go variant, but I think it’s less like Go than Blooms, for example (where territory and captures matter). As concisely as I can explain, on your turn you either place a single stone to start a new group, or add one stone to each of your existing groups. When the board is filled you score one point per stone, minus a predetermined number (e.g., 6 points) for every group you have. If you’re somewhat dense like me it will take you a few of plays to begin to understand the delicate early-game balance between starting new groups, vs. switching gears and adding to existing ones. The math tells you that the more groups you have the more stones you’ll eventually be able to add… and yet the higher group penalty points at the end. That brings up the entire strategy of positioning your stones — where Go-like play is generally rewarded. For instance, it’s nice if you could join up groups toward the end to avoid penalty points, or deny your opponent the same…. and to have staked out some territory to give your groups room to grow. Co-designed by Christian Freeling and Benedikt Rosenau, Christian says it’s one of only six games of his that he considers relevant. Or, as he also says, in as much as abstract games matter, this one matters. |
| simpledeep | 8 | |
| rchandra | 7 | |

| AI | Strong Wins | Draws | Strong Losses | #Games | Strong Win% | p1 Win% | Game Length |
|---|---|---|---|---|---|---|---|
| Random | | | | | | | |
| Grand Unified UCT(U1-T,rSel=u, secs=0.01) | 36 | 0 | 0 | 36 | 100.00 | 52.78 | 169.08 |
| Grand Unified UCT(U1-T,rSel=u, secs=0.03) | 36 | 0 | 5 | 41 | 87.80 | 43.90 | 169.02 |
| Grand Unified UCT(U1-T,rSel=u, secs=0.07) | 36 | 0 | 14 | 50 | 72.00 | 54.00 | 169.00 |
| Grand Unified UCT(U1-T,rSel=u, secs=0.20) | 36 | 0 | 5 | 41 | 87.80 | 48.78 | 169.00 |

Level of Play: Strong beats Weak 60% of the time (lower bound with 90% confidence).

Draw%, p1 win% and game length may give some indication of trends as AI strength increases; but be aware that the AI can introduce bias due to horizon effects, poor heuristics, etc.

| Size (bytes) | 32362 |
|---|---|
| Reference Size | 10293 |
| Ratio | 3.14 |

Ai Ai calculates the size of the implementation and compares it to the Ai Ai implementation of the simplest possible game (which just fills the board). Note that this estimate may include some graphics and heuristics code as well as the game logic. See the Wikipedia entry for more details.

| Playouts per second | 5027.77 (198.90µs/playout) |
|---|---|
| Reference playouts per second | 2780094.52 (0.36µs/playout) |
| Ratio (low is good) | 552.95 |

Tavener complexity: the heat generated by playing every possible instance of a game with a perfectly efficient programme. Since this is not possible to calculate, Ai Ai calculates the number of random playouts per second and compares it to the fastest non-trivial Ai Ai game (Connect 4). This ratio gives a practical indication of how complex the game is. Combine this with the computational state space, and you can get an idea of how strong the default (MCTS-based) AI will be.
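The figures in the playout table follow from two divisions. The sketch below reproduces them; the function name `playout_stats` is hypothetical, and the inputs are the two playouts-per-second values from the table.

```python
def playout_stats(playouts_per_second: float, reference_per_second: float):
    """Return (microseconds per playout, reference/game speed ratio)."""
    micros_per_playout = 1_000_000 / playouts_per_second
    ratio = reference_per_second / playouts_per_second
    return micros_per_playout, ratio


micros, ratio = playout_stats(5027.77, 2780094.52)
print(f"{micros:.2f} µs/playout, ratio {ratio:.2f}")  # 198.90 µs/playout, ratio 552.95
```

A ratio of about 553 means a random Symple playout takes roughly 553 times as long as a random playout of the reference game.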

| 1: White win % | 51.40±3.10 | Includes draws = 50% |
|---|---|---|
| 2: Black win % | 48.60±3.09 | Includes draws = 50% |
| Draw % | 0.00 | Percentage of games where all players draw. |
| Decisive % | 100.00 | Percentage of games with a single winner. |
| Samples | 1000 | Quantity of logged games played |

Note that win/loss statistics may vary depending on thinking time (horizon effect, etc.), bad heuristics, bugs, and other factors, so they should be taken with a pinch of salt. (Given perfect play, any game of pure skill will always end in the same result.)

Note: Ai Ai differentiates between states where all players draw or win or lose; this is mostly to support cooperative games.
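The margins in the win-rate table can be checked numerically. The sketch below counts each draw as half a win, as the table notes state, and then computes a 95% Wilson score interval. Using the Wilson interval, and reporting the distance from the observed rate down to the lower bound, is an assumption: it happens to reproduce the ±3.10 and ±3.09 figures, but the report does not say which method Ai Ai uses. The win count of 514 is inferred from 51.40% of 1000 decisive games.

```python
import math


def win_rate(wins: int, draws: int, games: int) -> float:
    """Win rate with each draw counted as half a win."""
    return (wins + 0.5 * draws) / games


def wilson_interval(p: float, n: int, z: float = 1.96):
    """Wilson score interval for a proportion p observed over n games."""
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return centre - half, centre + half


white = win_rate(514, 0, 1000)   # inferred: 514 White wins, no draws
black = win_rate(486, 0, 1000)
for name, p in (("White", white), ("Black", black)):
    low, _ = wilson_interval(p, 1000)
    print(f"{name}: {100 * p:.2f} ± {100 * (p - low):.2f}")
# White: 51.40 ± 3.10
# Black: 48.60 ± 3.09
```

Both intervals contain 50%, so 1000 games are not enough to show a first-player advantage at this level of play.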

| Label | Its/s | SD | Nodes/s | SD | Game length | SD |
|---|---|---|---|---|---|---|
| Random playout | 5,152 | 33 | 870,731 | 5,546 | 169 | 0 |
| search.UCB | 5,214 | 49 | | | 169 | 0 |
| search.UCT | 5,201 | 50 | | | 169 | 0 |

Random: 10 second warmup for the hotspot compiler. 100 trials of 1000ms each.

Other: 100 playouts, means calculated over the first 5 moves only to avoid distortion due to speedup at end of game.

Rotation (Half turn) lost each game as expected.

Reflection (X axis) lost each game as expected.

Reflection (Y axis) lost each game as expected.

Copy last move lost each game as expected.

Mirroring strategies attempt to copy the previous move. On the first move, they attempt to play in the centre. If neither of these is possible, they pick a random move. Each entry represents a different form of copying: direct copy, reflection in either the X or Y axis, or half-turn rotation.
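The mirroring strategies described above can be sketched as coordinate transforms plus a fallback. Everything here is illustrative rather than Ai Ai's code: the 13 × 13 board is an assumption based on the game length of 169, moves are simplified to single `(column, row)` cells, and "X axis" is taken to mean a flip of the row coordinate.

```python
import random

SIZE = 13  # assumption: 13 x 13 = 169 cells, matching the game length of 169


def copy_last(move):
    return move


def reflect_x(move):
    col, row = move
    return (col, SIZE - 1 - row)   # assumed meaning of "X axis": flip rows


def reflect_y(move):
    col, row = move
    return (SIZE - 1 - col, row)   # flip columns


def rotate_half(move):
    col, row = move
    return (SIZE - 1 - col, SIZE - 1 - row)


def mirror_reply(last_move, legal_moves, transform, rng):
    """Copy the previous move via `transform`; open in the centre; else play randomly."""
    if last_move is None:
        candidate = (SIZE // 2, SIZE // 2)
    else:
        candidate = transform(last_move)
    if candidate in legal_moves:
        return candidate
    return rng.choice(sorted(legal_moves))


rng = random.Random(0)
print(mirror_reply((0, 0), {(12, 12), (5, 5)}, rotate_half, rng))  # (12, 12)
```

On a placement game, `copy_last` always targets an occupied cell, so that strategy degenerates to random play.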

| Game length | 169.00 | |
|---|---|---|
| Branching factor | 37.54 | |
| Complexity | 10^208.74 | Based on game length and branching factor |
| Computational Complexity | 10^7.47 | Sample quality (100 best): 4.51 |
| Samples | 1000 | Quantity of logged games played |

Computational complexity (where present) is an estimate of the game tree reachable through actual play. For each game in turn, Ai Ai marks the positions reached in a hashtable, then counts the number of new moves added to the table. Once all moves are applied, it treats this sequence as a geometric progression and calculates the sum as n → ∞.
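The procedure just described can be sketched in two steps: count how many previously unseen positions each game contributes, then fit a geometric progression to those counts and sum it to infinity. The report does not say how the progression is fitted, so the least-squares fit on the logarithm of the counts below is an assumption, as are the function names and the toy data.

```python
import math


def new_positions_per_game(games):
    """For each game (an iterable of position hashes), count positions not seen before."""
    seen = set()
    counts = []
    for positions in games:
        before = len(seen)
        seen.update(positions)
        counts.append(len(seen) - before)
    return counts


def geometric_sum_to_infinity(counts):
    """Fit count_i ~ a * r**i by least squares on log(count) and return a / (1 - r).

    Assumed fitting method; needs at least two non-zero counts.
    """
    points = [(i, math.log(c)) for i, c in enumerate(counts) if c > 0]
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    slope = sum((x - mean_x) * (y - mean_y) for x, y in points) / sxx
    a = math.exp(mean_y - slope * mean_x)
    r = math.exp(slope)
    if r >= 1:
        return math.inf  # new positions are not drying up; the sum diverges
    return a / (1 - r)


print(new_positions_per_game([[1, 2, 3], [2, 3, 4], [4, 5]]))  # [3, 1, 1]
print(round(geometric_sum_to_infinity([8, 4, 2, 1]), 6))       # 16.0
```

If later games keep finding as many new positions as earlier ones, the fitted ratio reaches 1 and no finite estimate exists, which is one reason the figure appears only "where present".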

| Distinct actions | 170 | Number of distinct moves (e.g. "e4") regardless of position in game tree |
|---|---|---|
| Good moves | 69 | A good move is selected by the AI more than the average |
| Bad moves | 101 | A bad move is selected by the AI less than the average |
| Samples | 1000 | Quantity of logged games played |
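The good/bad split in the table is a comparison against the mean selection count. A minimal sketch, with a hypothetical function name and made-up counts:

```python
def classify_moves(selection_counts):
    """Split moves into those chosen more often and less often than the average."""
    average = sum(selection_counts.values()) / len(selection_counts)
    good = sorted(m for m, c in selection_counts.items() if c > average)
    bad = sorted(m for m, c in selection_counts.items() if c < average)
    return good, bad


# Made-up counts: the average is (10 + 1 + 1) / 3 = 4.
print(classify_moves({"a1": 10, "b2": 1, "c3": 1}))  # (['a1'], ['b2', 'c3'])
```

Because a few popular moves pull the mean upwards, bad moves will usually outnumber good ones, as they do here (101 against 69).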

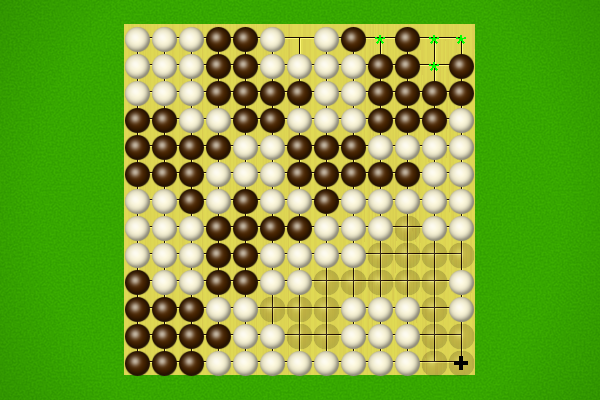

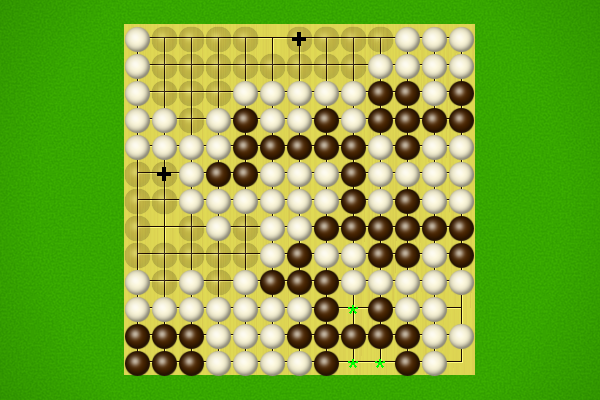

This chart is based on a single playout, and gives a feel for the change in material over the course of a game.

| 0 | 1 | 2 | 3 |
|---|---|---|---|
| 1 | 169 | 28561 | 2399293 |

Note: most games do not take board rotation and reflection into consideration.

Multi-part turns could be treated as the same or different depth depending on the implementation.

Counts to depth N include all moves reachable at lower depths.

Inaccuracies may also exist due to hash collisions, but Ai Ai uses 64-bit hashes so these will be a very small fraction of a percentage point.
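The counts in the depth table can be reproduced with simple combinatorics. The sketch below assumes that, during the first three plies, each player places exactly one stone per turn on any of 169 empty cells, that positions are distinguished only by which stones are where, and that the running total starts at depth 1. These assumptions are inferred from the numbers; this is an arithmetic check and not how Ai Ai counts positions (it uses a hash table).

```python
from math import comb


def cumulative_positions(cells: int, max_depth: int):
    """Running total of distinct positions after 1..max_depth alternating placements."""
    totals = []
    running = 0
    for depth in range(1, max_depth + 1):
        first_player = (depth + 1) // 2   # stones placed by the player who moves first
        second_player = depth // 2
        running += comb(cells, first_player) * comb(cells - first_player, second_player)
        totals.append(running)
    return totals


print(cumulative_positions(169, 3))  # [169, 28561, 2399293]
```

The depth-3 term is C(169, 2) × 167 = 2,370,732, fewer than the 169 × 168 × 167 move sequences, because swapping the order of the first player's two stones gives the same position.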

No solutions found to depth 3.

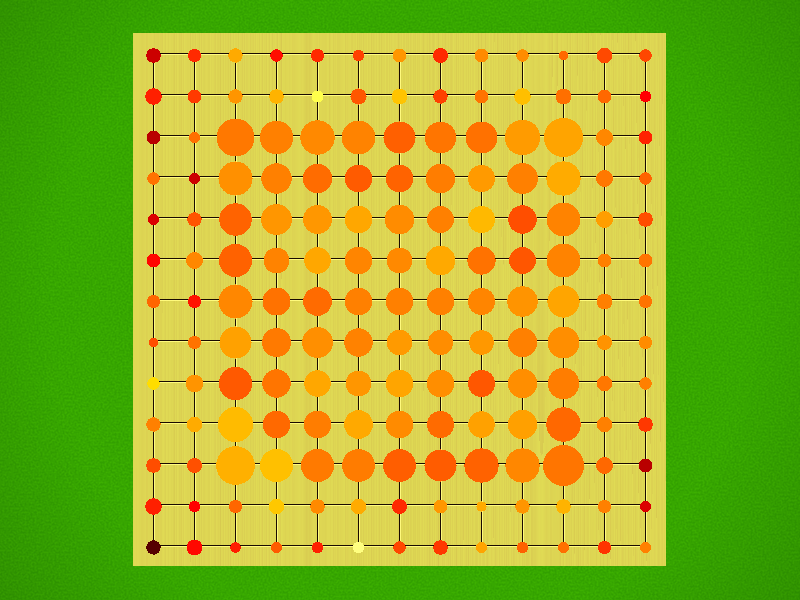

Colour shows the success ratio of this play over the first 10 moves; black < red < yellow < white.

Size shows the frequency this move is played.

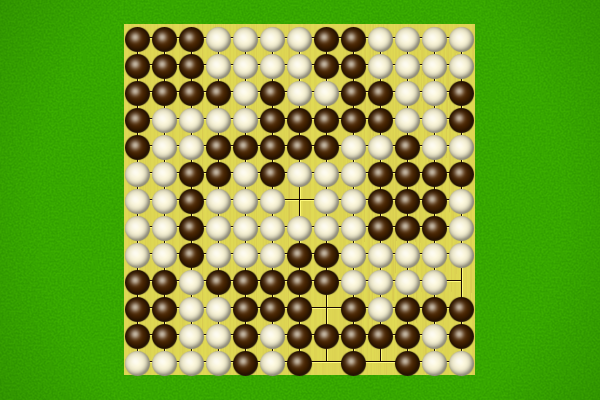

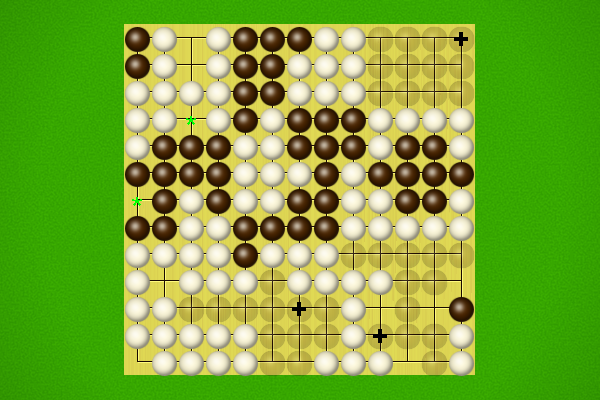

| Puzzle | Solution |
|---|---|
| Black to win in 5 moves | |
| Black to win in 5 moves | |
| Black to win in 7 moves | |
| Black to win in 10 moves | |

Selection criteria: the first move must be unique, and not forced to avoid losing. Beyond that, puzzles are rated by the product of [total moves]/[best moves] at each step, and the best puzzles are selected.
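The rating rule above is a product of ratios, one per step of the solution. A minimal sketch, in which the function name and the example step counts are made up:

```python
from math import prod


def puzzle_rating(steps):
    """steps: one (total_moves, best_moves) pair per step of the solution."""
    return prod(total / best for total, best in steps)


# Made-up puzzle: 10 moves with 1 best, then 4 with 2 best, then 6 with 3 best.
print(puzzle_rating([(10, 1), (4, 2), (6, 3)]))  # 40.0
```

A step with many legal moves and a single best move contributes a large factor, so the highest-rated puzzles are those where the solver has to find a narrow path several times in a row.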