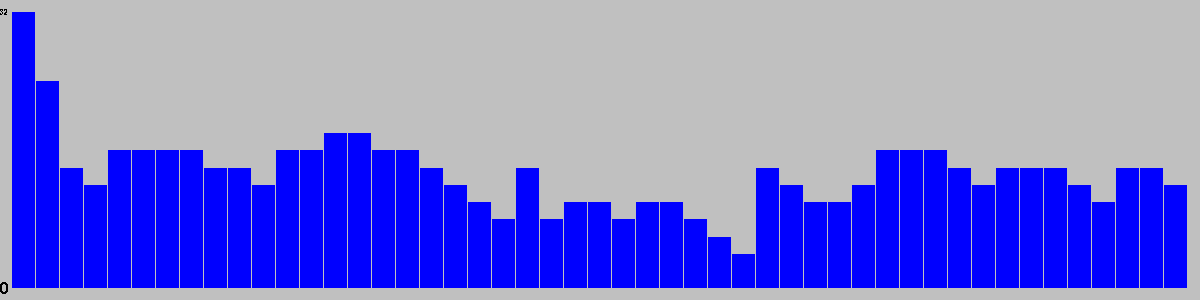

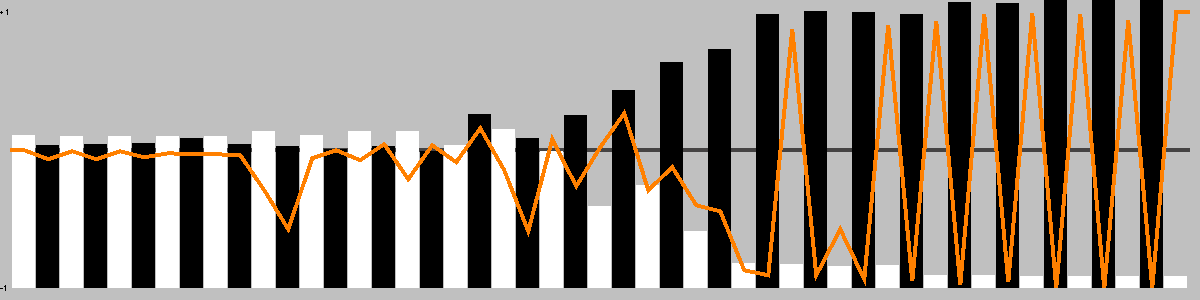

| User | Rating | Comment |
|---|---|---|

| TheAdamGlass | 8 | |

| tjgames | 10 | Hey, I made it, so I have to give it a 10, right? Well, the truth is that if I hadn't, I'd probably give it at least an 8, more likely a 9. I love playing this game and I still play it via SuperDuperGames.org regularly. Drop by and tell them BlueIstari sent you. |

| mrraow | 7 | Quick, clever game on a small board with shared pieces. |

| Graphia_69 | 7 | |

| MyOtheHedgeFox | 7 | |

| mattcurtis89 | 7 | |

| minarelli | 8 | |

| balduran26 | 8 | |

| Playbazar | 8 | |

| metallaio | 10 | always in my backpack |

| viszla | 6 | |

| PBrennan | 5 | You'd probably call this a micro-abstract, kind of a mini-GIPF meets Connect-4, aiming to get 4 in a row in the same colour in a 4x4 grid. Each time you do, you keep one of those tokens as your score and the rest of the row gets wiped. Eventually the pieces run out and whoever's scored the most rows wins. The cool thing was that you could play a token in either colour, and then each orthogonally adjacent token flipped to the same colour. That certainly made things a bit thinky. It was probably just inexperience and look-ahead failure, but we kept getting into situations where it didn't matter what you played, the opponent was going to score, and that lessened the game for us. I'm not a huge fan of abstracts to begin with so it didn't win me over, but I didn't mind playing it either. |

| jeep | 7 | |

| thenexusgame | 7 | |

| Aslantr | 7 | |

| maruXV | 9 | |

| Baartoszz | 5 | |

| cerulean | 7 | A standout among four-in-a-row games. Look before you leap! |

| The Duke of Kestrel | 7.5 | |

| MithrasSWE | 6 | |

| glitchhike | 8 | |

| arkonnen7 | 7 | |

| lele81 | 8 | |

| cyberpianta | 7 | |

| fabio | 7 | |

| nycavri | 4 | Hurts my head. |

| fivecats | N/A | Copy for review in GNM |

| Mal17 | 8 | |

| i7dealer | 7 | A nice abstract game. I think it's hard to see what's happening in your first game or two. It seems to be somewhat of a game of errors, in that you maneuver not to give your opponent anything, until a row is forced to be given up. |

| Woodstocky | 7 | |

| Wentu | 6.3 | Extremely simple and fast abstract with nice components. First time I've seen the little bag for the pieces doubling as a board. That's smart! The game is very simple and interesting, but I am convinced its decision tree is too small and it could be easily solved. |

| SirFantato | 8 | |

| anovac | 5.5 | |

| AndreaGot | 7 | |

| unic | 5 | |

| Cekufrombeyond | 6.5 | |

| codinh | 7.5 | I'm amazed how such a simple game with basic components can bring something new to the table. WG is very clever in its mechanic, and requires a few plays to really get it. After that, it's a neat abstract game with a low footprint and very portable (the bag is the playing field!), easy to learn but hard to master. Good for a quick game and as a smart puzzle with your spouse, a friend or whoever you want. |

| mnemonicuz | 5 | A pretty good abstract 4-in-a-row game where you have to focus on not setting your opponent up for a score rather than scoring yourself. It works well, but I think the games in the GIPF series do most aspects of this game better. It also has a nice starving mechanism where the game will end when there are no more pieces to add, but again, the GIPF games do it better, both in starving and in self-penalizing for catch-up when you score. |

| Striton | 8 | I really love this game. I've been trying to pin down why, exactly, because the mechanics are quite simple. Maybe it's just "where I am" right now in my gaming, but I find this game a breath of fresh air. I highly recommend it. |

| teppei978 | 8 | |

| Niart | N/A | Very elegant little game. Components put to good use, in my opinion. |

| Abdul | 7 | Connect Four meets Othello. Satisfying amount of brain burn for such a compact package. Recommended if you like abstracts. |

| Solipsiste | 7 | |

| scih | 5.5 | |

| LL81 | 5 | |

| _Randi_ | 7 | [RESERVED] 9€ The game and its components are good, but... green + red?! It's not a color-blind-friendly choice at all! :( Next time maybe use blue + red or choose different flower designs. (My copy came with 17 seeds, so I use the extra one as the tie breaker, instead of the Wizard's Tome a.k.a. the rulebook.) |

| scarze86 | 6 | |

| Iolanda | 9 | |

| MatteoNeil | 7 | |

| Tritrow | 5 | A more complex version of Connect Four. Interesting idea and plays fast, but at the end of the day it's nothing special. |

| El Sapo | 9 | |

| Talisinbear | N/A | Outstanding |

| Throglok | 8 | |

| dralius | 5 | |

| KubaP | 8.2 | A VERY smart abstract. Really good. |

| aL3s5aNdRo | 6.7 | |

| ruitz | 7 | |

| Kytty | 6 | PnP from about.com. Nice little twist using Reversi pieces. |

| reolon1996 | 6 | |

| herace | N/A | Wishlist(3) = Print & play game -or- Never was a boxed set |

| bgg15 | 7 | |

| Nischtewird | 6 | 16E in Libraria |

| draghetto | 5.5 | |

| glaurent | N/A | Playable using 4x4 grid, othello pieces, pawn. |

| TheLadyOfWaterdeep | 6 | |

| daveo1234 | 7 | Another one of the "bag is the board" games. Fun little abstract. After two plays, we definitely got better at it as we went. It's fun, especially when first learning it. I have a nagging feeling that it's solvable though. We'll see! (maybe:) 2 2p - 7 |

| Migio | 7.5 | |